Abstract

This study provides an application of card sorting to address challenges resulting from curricular change. Card sorting and scenario-based usability testing were used to determine the organization of online course sites for a systems-based, integrated science curriculum. The newly implemented curriculum eliminates discipline-based boundaries and focuses on simultaneous investigation of the biochemistry, histology, anatomy, and physiology of organ systems. Two cohorts of students were recruited. A cohort of second-year students familiar with the traditional, discipline-based curriculum participated in a card sort to establish the initial site organization prior to implementation of the new curriculum. A second cohort consisting of first-year students participated in a card sorting activity after exposure to the new curriculum. Scenario-based usability testing demonstrated that all participants were able to successfully navigate the modified course sites. A think-aloud protocol was employed during both card sorts to better understand participant perceptions of content and content organization. Differences in results between the two cohorts, with regard to content organization, suggest that an iterative approach to card sorting is beneficial in site construction and modification. Although the initial card sort allowed the faculty to develop a course site structure that could function well within the new curriculum, the second card sort provided insight into unanticipated navigational issues and allowed for modifications to site organization before the development of significant problems. Results suggest that repeated use of card sorting may be an effective means of creating course sites that are more focused and can more specifically meet user needs.

Practitioner’s Take Away

The following points may help usability practitioners complete a similar study:

- Identify the primary user group before making site modifications, even if other groups will be using the site.

- Use task scenarios that are relevant to the specific experience of participants within the usability test.

- Collaborate with others when developing cards for card sorting to help ensure appropriate coverage of site content and to avoid keyword bias.

- Use a think-aloud protocol during card sorting to get more specific data on participant preferences and needs.

- Don’t be satisfied with one card sort. A second card sort can help determine whether assumptions about user preferences based on the first card sort are supported or not.

Introduction

In Fall 2011, the University of Illinois at Chicago (UIC), College of Dentistry initiated an integrated, systems-based dental curriculum. The newly implemented curriculum eliminates discipline-based boundaries and focuses on simultaneous investigation of the biochemistry, histology, anatomy, and physiology of organ systems. For example, a student studying the human heart would learn not only the anatomic structure of the heart but also its embryologic development, what it looks like microscopically, how it functions physiologically, how it might be affected by the presence of various microorganisms or pathologies, and how this information is clinically relevant. In an integrated curriculum, all of this content is explored within one course focusing on the cardiovascular system. Students undertake a similar process throughout the first year in classes devoted to the digestive system or to the musculoskeletal system, until all organ systems are explored. Subsequent years revisit this information and introduce additional applications such as pathology, pharmacology, and clinical activities. In contrast to a traditional lecture-based curriculum, small group, case (scenario)-based learning serves as the primary vehicle for instruction in the new model. Collaboration is a key component, and students work in groups of 6 or 7 to research and explore topics introduced through a series of scenarios. In the first year, student work is supplemented with laboratory exercises (such as an anatomy or histology lab) and sessions with content experts. Throughout the curriculum, student schedules are rigid and full, with curricular activities running from 8:00 a.m. to 4:30 p.m. daily.

Although considerable planning led to the identification and development of numerous resources available for student use in the integrated curriculum, as the Fall 2011 implementation date neared, the faculty and administration realized that it was essential to develop an intuitive and logical way for students to access these resources. Specifically, the integrated, systems-based approach precludes the division of content into well-defined and isolated disciplines and students must locate and use course materials without the convenience of distinct categories for content organization. Additionally, faculty members from multiple disciplines would need to collaborate to set up and maintain course sites within Blackboard®, the course management system used at UIC College of Dentistry. Blackboard is an enterprise software application that provides a means to deliver digital resources to students; students can track their grades, participate in discussion groups and blogs, and take online quizzes, among many other functions. Ultimately, Blackboard course site organization within the new curriculum required a more holistic approach that would parallel the overall spirit and design of an integrated educational experience.

Some challenges for course site organization, imposed by the curricular changes, included the following:

- Allowing students to access site materials efficiently and effectively: Time wasted looking around for a resource is extremely frustrating and demoralizing for students because of the rigidity and intensity of the daily schedule and the lack of available free time.

- Creating a site organization that can be easily replicated across similar course sites for consistency: An essential part of the design of the new curriculum is seamless movement from course to course. Students explore specific organ systems within each course, but the process of exploration and the activities in which students are involved must be consistent across all courses within each year of the program. Students should not have to devote time to figuring out a new course site structure for each new course.

- Creating a system in which all faculty members involved in a course can easily understand where to upload materials: Although individual faculty members are involved in multiple courses rather than concentrating their efforts in one or two discipline-based courses, the amount of faculty time and responsibility within a single course is drastically reduced. Although students, as the primary site users, spend more time interacting with the course sites, the site structure must also allow faculty to easily provide resources to class members.

Addressing the challenges listed above and providing appropriate attention to order and consistency within and among course sites were identified as keys to a more effective engagement strategy. Because most of the organizational challenges identified above related to student usage, and because students spend substantially more time interacting with the course sites than do faculty members or administrators, students were recruited to help determine which information architecture conformed best to student organizational preferences and expectations for site navigation. Usability methods, such as card sorting, were employed to obtain these data.

Card Sorting

Card sorting refers to a number of exercises in which participants group and/or name objects or concepts. The data gleaned from card sorting activities allow researchers to understand how participants develop categories or view relationships among concepts (Hudson, 2012). Card sorting grew out of Q methodology, a research method developed in the field of psychology by William Stephenson (1953), a physicist/psychologist. Q methodology arose as a technique for investigating subjectivity and has largely been used in research that hinges upon participants’ perceptions and as an important methodology for qualitative analysis (Brown, 1996). Q sorting refers to the ranking or grouping of variables by participants and is the means by which data are generated for factor analysis in Q methodology.

Within the field of psychology, card sorting has been used for making inferences about participant characteristics, such as reaction time (Jastrow, 1898), memory function (Bergstrom, 1893; Bunch & Rogers, 1936), and personality assessment (Block, 1961). Later applications of sorting activities include the development of the Wisconsin Card Sorting Test (Berg, 1948), a neuropsychological test often used clinically for identifying frontal lobe impairment (Anderson, Damasio, Jones, & Tranel, 1991), and, more recently, the use of card sorting for structuring cartographic symbols (Roth et al., 2011). In recent years, card-sorting methodologies have frequently been employed in usability research (Nielsen & Sano, 1995) and in web design (Lewis & Hepburn, 2010). Card sorting provides a level of insight and understanding into the mental models of website users by examining how users categorize and organize website material. These data allow site developers to display content in such a way that the extraneous cognitive load required to navigate the site is minimized, and users can retrieve information from the site more easily.

Types of Card Sorting Methods

There are many different types of card sorting methods that may be employed during the design process. Which methodology is selected depends very much on the questions being addressed and, often, on the stage of design being investigated.

In an open card sort, a participant groups together cards that seem to logically belong together, and it is the participant who, ultimately, defines and names all grouping categories. Open card sorts provide a great deal of freedom to participants, allowing them to eliminate cards, to sort cards into as many groups as desired, and to develop names for each grouping.

In a closed card sort, the user is asked to group cards into predefined categories. This technique provides more structure and less flexibility to participants. This type of approach can be very useful if the goal is to modify an existing structure.

A semi-closed card sort is a variation on the closed card sort. It is conducted, initially, as a closed card sort using predefined categories but the participants can change the category names at any time.

An inverse card sort is another variation on the closed card sort and asks participants to find or rank cards within a completed structure. This strategy is often used to validate or test assumptions about information architecture.

Additionally, there are other variations, such as a Delphi method or a modified Delphi method (Paul, 2008) that allow participants to receive some feedback about the card sorting results of other participants prior to doing their own sorting.

Methods

The following sections discuss the study’s design, participants, materials used, and the procedures. This study involves two distinct card sorts, separated temporally by one year. Data from the first card sort were used to develop an initial course site, and data from the second card sort were used to modify the site.

Study Design

Card sorting and scenario-based usability tests were used to determine the organization of first-year course sites within the course management system. This study was conducted with approval from the UIC Institutional Review Board (UIC#2011-0359).

The methodology of the current study can be summarized and subdivided into five specific stages:

- Stage 1: Open card sorting activity, prior to implementation of the new curriculum, with students who had just completed their first year in the traditional program (Cohort A)

- Stage 2: Initial course site development and scenario-based usability test

- Stage 3: Implementation of the sites in the new curriculum

- Stage 4: Semi-closed card sorting activity after implementation of the new curriculum with students who had just completed their first year in the integrated (new) curriculum (Cohort B)

- Stage 5: Course site modification and scenario-based usability test

A process flow diagram of these activities is presented in Figure 1.

Figure 1. Process flow diagram demonstrating stages of the study

Participants

There were two sets of participants who conducted card sorts: one set for the initial design of the site and one set for the modification of the design one year later. Participants from two first-year dental student cohorts at UIC College of Dentistry were recruited by email for participation in the card sorting activities. Course sites needed to be finalized and ready to use prior to the first day of the new curriculum. Unfortunately, the faculty did not have access to incoming students prior to this date so initial testing was conducted using students who had just completed their first year of the traditional curriculum (Cohort A). Although these students did not have experience with the new course structure, they had participated in many of the same laboratory and clinical activities, and they more closely resembled the incoming students in terms of demographics, background, and experience than the faculty or any other potential participant group. Data from the first set of participants, Cohort A, provided input for the initial course site design.

The curriculum planning committee decided that a second card sort with participants from the first class to actually experience the new curriculum for a year, Cohort B, would provide data for the modification of the initial design.

Cohort A participants, who had completed the first year of the traditional lecture-based, discipline-based curriculum, consisted of 17 students (6 males, 11 females, average age = 24.1). Cohort B participants, who had completed one year of the case-based, integrated, systems-based curriculum, consisted of 15 students (5 males, 10 females, average age = 24.3). Five additional students from each cohort were recruited to participate in a scenario-based usability test of each site design (Cohort A: n = 5, 1 male, 4 females; Cohort B: n = 5, 2 males, 3 females). Participation in the study was voluntary, and no compensation was offered.

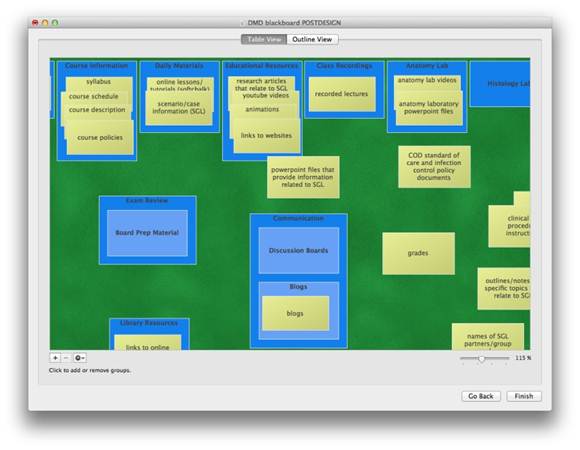

Materials

The free card sorting application for Mac operating systems, xSort© (Enough Pepper, 2012; http://www.xsortapp.com/), was used in this study. The xSort application allows researchers to set up the sorting activity. Within the application, a simulated tabletop and stacks of cards allow for open, closed, and semi-closed card sorting. Researchers input card names, and participants use a computer mouse to move cards into groups (Figure 2).

Figure 2. Card sort within the program xSort (figure use and adaptation permission from xSort, http://www.xsortapp.com/): Participants group information together and can create hierarchies within the information architecture.

Regardless of whether an open, closed, or semi-closed sort is designated, xSort allows participants to leave some cards unsorted if they are unsure of how to group them. Any unsorted cards are excluded from the analysis.

Faculty members on the curriculum planning committee worked together to identify card names for the open card sorting activity. Card names were based on materials typically included in Blackboard course sites for first-year dental students at UIC. The existing literature recommended using no fewer than 30 and no more than 100 cards in order to make the most effective use of participants’ time (Spencer, 2009). Following those guidelines, faculty developed 45 cards, each representing one type of content typically found in a course site for first-year dental students. The committee reviewed card names and discussed each in detail to ensure that each card represented a single type of content and that there was minimal or no overlap among card names.

One challenge of card sorting is avoiding keyword bias, or card names that might influence the sorting. For example, having a set of cards that included “anatomy videos,” “histology videos,” and “physiology videos” might bias a participant towards grouping them together into a “videos” category purely based on the card names, rather than on concepts that may be more important to the participant. In this study, card names were selected to minimize keyword bias as much as possible, and the author changed the word order or used synonyms to represent content whose title closely resembled the title of other cards. Descriptions were used as often as possible so “anatomy videos” might change to “recordings of laboratory dissections,” for example. Card names were then entered into xSort.
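
As a rough illustration of the keyword-bias check described above, the following Python sketch flags words shared by two or more card names. It is not the procedure used in this study, where names were reviewed by hand. The function name `shared_keywords`, the stopword list, and the "course syllabus" card are hypothetical and included only for illustration.

```python
from collections import defaultdict

# Hypothetical list of words too common to suggest a grouping on their own.
STOPWORDS = {"of", "the", "and", "for", "to", "a", "in"}

def shared_keywords(card_names):
    """Return {word: [card names]} for every non-trivial word used by 2+ cards."""
    cards_by_word = defaultdict(list)
    for name in card_names:
        for word in set(name.lower().split()):
            if word not in STOPWORDS:
                cards_by_word[word].append(name)
    return {word: names for word, names in cards_by_word.items() if len(names) > 1}

# Card names that invite a "videos" group purely because of shared wording.
flagged = shared_keywords(
    ["anatomy videos", "histology videos", "physiology videos", "course syllabus"]
)

# After one card is renamed with a description, no shared keyword remains.
after_rename = shared_keywords(
    ["recordings of laboratory dissections", "histology videos", "course syllabus"]
)
```

A flagged word is only a prompt for review. Whether a shared term actually biases participants remains a judgment call for the people writing the cards.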

Procedures

The following sections discuss specific procedures for each stage of the study.

Stage 1: Open card sort with Cohort A

The 17 participants from Cohort A were recruited by email. Summary statistics regarding self-reported gender and age of participants, in addition to the number of groups formed by each participant and the time for completion of the card sort, were collected in xSort.

During the open card-sorting activity, Cohort A participants grouped related cards into categories and subsequently named each category. Participants had the ability to form as few or as many groupings as they desired and also had the option of removing cards from the sort. Within this open card sort, hierarchies were unrestricted and participants were able to group cards into subcategories. The author observed the participants and asked them to think aloud, talking through the processes involved in grouping cards and naming groups. After sorting all cards, participants ranked the groups in order of importance. A faculty member took notes to record all observations, and each participant’s movements through the card-sorting program were captured with the screen-capture software Camtasia Studio® (http://www.techsmith.com/camtasia.html).

Stage 2: Course site development and scenario-based usability test

Using the categories and information architecture gleaned from Cohort A’s open card sort, the author, with assistance from the Office of Dental Education at UIC, constructed a template course site in Blackboard.

Five additional participants from Cohort A, who had not participated in the open card sort, were recruited via email to test the template site. Participants were asked to complete nine task scenarios related to navigation through the site as shown in Table 1.

Table 1. Task Scenarios for Usability Test with Cohort A and Cohort B

Stage 3: Implementation of the site in the new curriculum

The author (with assistance from the UIC Office of Dental Education) constructed all biomedical sciences course sites for the 2011-2012 academic year using the template site and the feedback from participants in the usability test. The new first-year class (Cohort B) used the newly constructed sites throughout the 2011-2012 academic year. Cohort B students also received a brief orientation demonstrating how to navigate the sites at the beginning of the Fall 2011 semester.

Stage 4: Semi-closed card sort with Cohort B

At the end of the 2011-2012 school year, 15 participants from Cohort B were recruited via email to participate in a semi-closed card sort. The purpose of this second card sort, separated temporally from the first card sort by one year, was to determine what modifications should be made to the course sites to make them more consistent with user needs. The first card sort was necessary to provide a starting point so that the course sites could exist prior to the start date of the new curriculum. The second card sort provided an opportunity to see if our conclusions about the organization and our predictions about what would make sense to students in the new curriculum (all based on data from the first card sort) were supported or not.

Cohort B participants were asked to group the same cards as in Cohort A’s open card sort. In the semi-closed card sort, however, Cohort B participants were provided with the names of the consensus categories appearing on the course sites and were asked to place cards within the named groups. Cohort B participants were able to add new groups or delete or rename existing groups. As with the Cohort A card sort, after sorting all cards, participants ranked groups in order of importance. All participants were observed and all movements within the card sort were captured using Camtasia Studio®. Data from the semi-closed card sort were subjected to the same treatment as the open card sort data.

Stage 5: Course site modification and scenario-based usability test

The course template site was modified to reflect results from the Cohort B semi-closed card sort.

Five participants from Cohort B who did not participate in the card sort were recruited by email to participate in a scenario-based usability test. Task scenarios were the same as in the first usability test (see Table 1).

Results

The following sections provide results for the card sorts for both Cohort A and Cohort B.

Cohort A, Stage 1: Open Card Sort

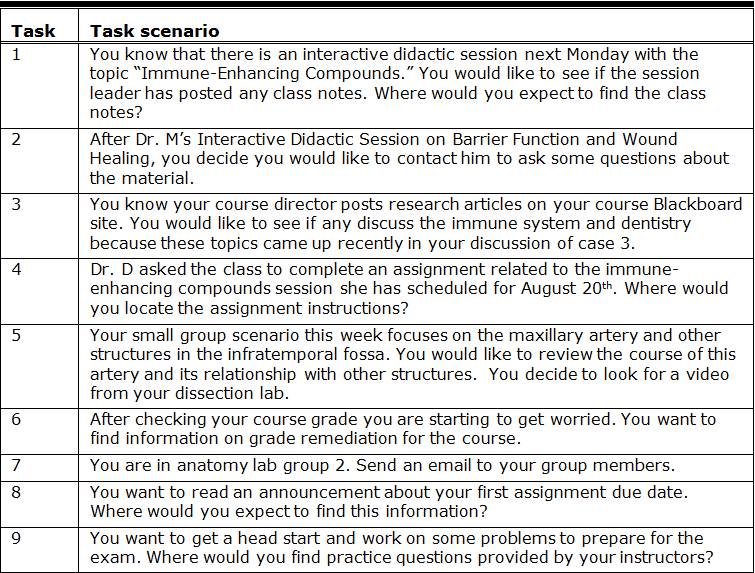

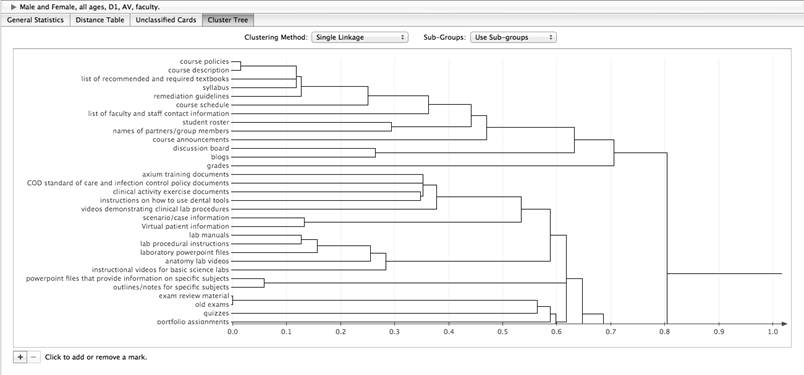

xSort summarized the results of the card sort as a distance table and a cluster tree. The distance table (Figure 3) indicates the normalized distance between all cards, so that cards always grouped together receive a value of 0 and cards never grouped together receive a value of 1. The cluster tree (Figure 4), also called a dendrogram, depicts the most common groupings: cards clustered more closely to each other are, on average, grouped together more often by participants than cards spaced further apart on the tree.

Figure 3. The distance table created in xSort (figure use and adaptation permission from xSort). Cards always grouped together receive a value of 0, and cards never grouped together receive a value of 1.
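
To make the 0-to-1 scale concrete, the sketch below computes a distance table of this kind in Python. It is not xSort's implementation, and xSort's exact formula is not documented here. The sketch assumes that the distance between two cards is one minus the fraction of participants who placed them in the same group, counted only over participants who sorted both cards. The card names and the three example sorts are hypothetical.

```python
from itertools import combinations

def distance_table(sorts, cards):
    """Return {(card_a, card_b): distance}; 0 = always grouped together, 1 = never."""
    together = {pair: 0 for pair in combinations(sorted(cards), 2)}
    counted = {pair: 0 for pair in together}
    for groups in sorts:  # one participant's sort: a list of sets of card names
        group_of = {card: i for i, group in enumerate(groups) for card in group}
        for a, b in together:
            # Assumption: a pair is ignored when either card was left unsorted.
            if a in group_of and b in group_of:
                counted[(a, b)] += 1
                if group_of[a] == group_of[b]:
                    together[(a, b)] += 1
    return {
        pair: (1 - together[pair] / counted[pair]) if counted[pair] else 1.0
        for pair in together
    }

# Hypothetical data: four cards, three participants (the third left one card unsorted).
cards = ["grades", "quiz scores", "syllabus", "lab manual"]
sorts = [
    [{"grades", "quiz scores"}, {"syllabus", "lab manual"}],
    [{"grades", "quiz scores"}, {"syllabus"}, {"lab manual"}],
    [{"grades", "quiz scores", "syllabus"}],
]
table = distance_table(sorts, cards)
```

In this example "grades" and "quiz scores" are always grouped together (distance 0), "grades" and "lab manual" never are (distance 1), and "lab manual" and "syllabus" share a group in one of the two sorts that include both cards (distance 0.5).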

Figure 4. Cluster tree created in xSort (figure use and adaptation permission from xSort). Cards clustered more closely to each other are grouped together more often by participants than are cards spaced further apart. As you move from left to right in the dendrogram, the groupings are weaker but include a larger number of cards within each group.
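
The left-to-right weakening of groupings in a dendrogram can be illustrated with a small agglomerative clustering sketch in Python. The linkage rule that xSort applies is not stated in this article. The code assumes average linkage, in which the distance between two clusters is the mean distance over all pairs of cards drawn from them. The distances and card names are hypothetical.

```python
def average_linkage(distances, cards):
    """Repeatedly merge the two closest clusters; return the merges in order.

    distances maps frozenset({card_a, card_b}) to a value between 0 and 1.
    """
    clusters = [frozenset([card]) for card in cards]
    merges = []

    def gap(x, y):
        # Average linkage (an assumption): mean distance over cross-cluster pairs.
        return sum(distances[frozenset([a, b])] for a in x for b in y) / (len(x) * len(y))

    while len(clusters) > 1:
        candidates = [
            (gap(x, y), i, j)
            for i, x in enumerate(clusters)
            for j, y in enumerate(clusters)
            if i < j
        ]
        height, i, j = min(candidates)  # closest pair of clusters
        merged = clusters[i] | clusters[j]
        merges.append((sorted(merged), round(height, 3)))
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return merges

# Hypothetical distances between four cards.
cards = ["grades", "quiz scores", "syllabus", "lab manual"]
distances = {
    frozenset(["grades", "quiz scores"]): 0.0,
    frozenset(["syllabus", "lab manual"]): 0.5,
    frozenset(["grades", "syllabus"]): 0.8,
    frozenset(["quiz scores", "syllabus"]): 0.8,
    frozenset(["grades", "lab manual"]): 1.0,
    frozenset(["quiz scores", "lab manual"]): 1.0,
}
merges = average_linkage(distances, cards)
```

Each entry in `merges` corresponds to one join in the tree. Early merges have small heights (strong, small groups), and later merges have larger heights and contain more cards, which mirrors the pattern described in the Figure 4 caption.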

After the open card sort, the author established (using xSort) which cards were grouped together most often by participants. Results of Cohort A’s open card sort revealed that Cohort A participants preferred to keep the biomedical sciences materials separate from clinical materials. In fact, Cohort A preferred to have two completely separate course sites for these different parts of the first year curriculum, and clinically related cards were often left as unsorted cards. Alternatively, if clinically related cards were sorted, participants noted during the think-aloud process that these clinical groupings should appear on a separate site.

Cohort A participants consistently ranked content that had to be accessed most frequently throughout the semester as higher in importance than content that would only be accessed occasionally or infrequently. Interestingly, in the think-aloud protocol participants tended to make clear distinctions between required and optional resources. If a resource or material was indicated as required, most participants wanted to have it grouped with other required material and organized by the date on which that topic would be covered in class. If a resource or material was indicated as optional or supplemental, participants ranked it as lower in importance and grouped it with other optional materials. These supplemental resources were often arranged in subgroups by content topic, rather than by date covered, and several participants questioned the value of including content that was not required.

Cohort A, Stage 2: Site Development and Usability Test

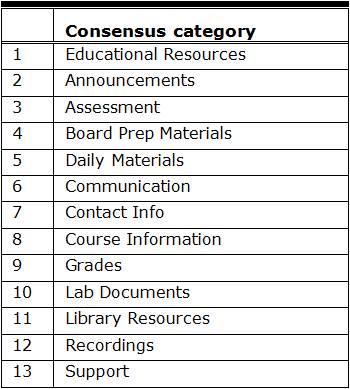

The author used these data to develop 13 group names that reflected the primary theme of each group (Table 2). For example, if discussion boards and blogs were consistently placed within the same group by the majority of study participants but some participants named this group “blogs and boards” and others named it “conversations,” a consensus group name might be “communication.” In some cases, all participants used the same category name (this was true for “announcements” and “grades”). For other categories, participants may have grouped similar items together but arrived at very different category names.

Table 2. List of Categories Created After Open Card Sort With Cohort A

Much of the interpretation and identification of the themes used to construct consensus categories came out of the think-aloud protocol. Because faculty members took notes during these sessions and participant movements were captured on screen, the author was able to go back and review participant explanations of the names they provided to each category and the motivation for using a specific name and for grouping certain items together. For example, the consensus category “educational resources” was called “supplemental material,” “enrichment,” “extra resources,” “extra educational materials,” and “articles and recordings” by different participants. The author decided upon the category title “educational resources” because all participants viewed these resources as clearly distinct from the essential resources required for their sessions with faculty. Required materials were put in the category “Daily Materials,” which was called “class materials,” “lecture stuff,” and “course materials” by different participants. The author decided upon the name “Daily Materials” to avoid the use of the term “lecture,” which is limited in the new curriculum, and to support participants’ emphasis on the importance of frequency of access. The consensus categories formed the basis for the Blackboard course site navigation menu. In some cases, it was necessary to deviate from card sort results alone and rely on faculty experience and knowledge about the intended structure of the new curriculum. Some examples include the following: (a) Although most participants preferred the elimination of “Blackboard support,” it had to be retained as a default category in Blackboard; and (b) because of additional laboratory activities in the new curriculum, the term “Lab Documents,” used by numerous participants, would be too broad and inclusive to be useful, so materials were subdivided into specific lab categories such as Dissection Lab and Microanatomy Lab.

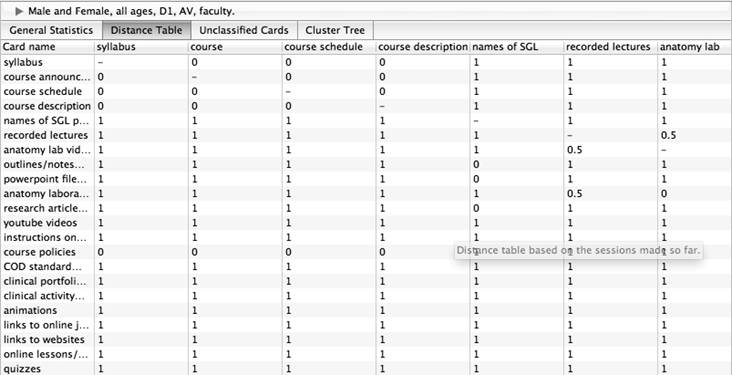

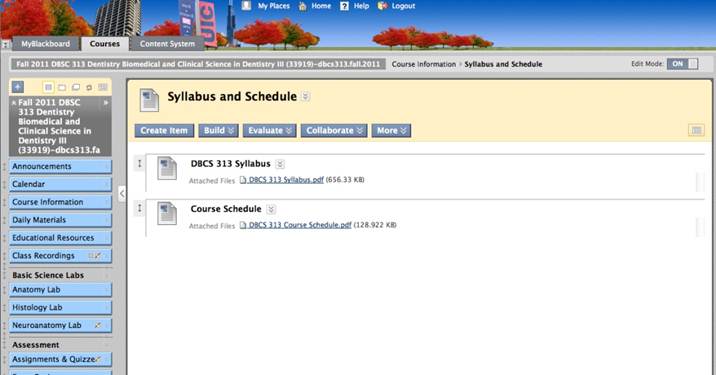

Using the categories and information architecture gleaned from Cohort A’s open card sort, the author, with assistance from the Office of Dental Education at UIC, constructed a template course site in Blackboard (Figure 5).

Figure 5. The initial integrated course site developed using results of the Cohort A (traditional curriculum) card sort (figure use and adaptation permission from Blackboard Inc.)

Within the course site navigation menu bar, the consensus category names became the menu tabs and the cards within each category translated to folders that appeared when clicking on a given tab. This organization reflected the hierarchy revealed through the card sort, wherein the category cards (named by the participants) were located higher in the hierarchy than the individual cards. Because Cohort A also placed high importance on frequency of access, required date of access, relevant discipline, and medium (as revealed through the think-aloud protocol), some of the menu tabs (based on the consensus categories) were further subdivided.

Usability testing

The five Cohort A participants recruited for the scenario-based usability test were able to successfully complete all of the assigned task scenarios. Cohort A participants were able to navigate through the template site and complete each task scenario without assistance from the faculty observer within 1 minute per task scenario.

Cohort B, Stage 4: Semi-Closed Card Sort

The second card sort was necessary to determine if the site organization continued to logically and consistently represent course activities after a year of use. Additionally, comments from both students and faculty indicated that there were unanticipated issues with the initial site design. For example, Cohort A’s open card sort suggested that students preferred to have content grouped by discipline-related activities (anatomy, physiology, biochemistry) and then by medium (PowerPoints, videos, notes, animations). After a year in the new curriculum, Cohort B students disclosed that the volume of available resources on the course sites “often overwhelmed them” and that organization by medium within a given activity did not allow them to quickly find potentially helpful materials. Cohort B students also complained that they would have preferred to have materials organized into groups based on the corresponding case or scenario to which each resource related, rather than by whether the material was accessed more or less frequently. Frequency of access had been very important to Cohort A students, based on the think-aloud part of the open card sort and the subsequent usability test with the initial site.

The semi-closed card sort also produced a distance table and a cluster tree (dendrogram). Results of Cohort B’s semi-closed card sort revealed that, even after using Cohort A’s open card sort data for template site organization, the course site structure still required modification in order to better align with the organization expected by student users in the integrated curriculum. The think-aloud protocol used during both card sorts also suggested that Cohort B preferred a more complex architecture wherein the organization of resources on the site, regardless of whether they were initially organized by activity or by date, maintained a connection in name to the case scenarios that formed the basis of the small group learning sessions in the new curriculum.

Consistent with the Cohort A students, the majority of Cohort B participants believed that clinical materials should be housed on a site distinct from the biomedical sciences materials. Also consistent with Cohort A, Cohort B ranked content accessed most frequently as higher in importance than content accessed infrequently. During the think-aloud part of the card sort, however, Cohort B participants did not specifically mention frequency of access as a factor in their sorting, while Cohort A participants did.

Results of the Cohort B card sort and usability test differed from those of Cohort A in a few key ways:

- Cohort B preferred to group content as it related to specific activities rather than according to specific disciplines or media.

- Cohort B participants wanted some sort of connection in nomenclature between all site content and cases/scenarios used in the small group learning activity (small group learning is the primary vehicle for learning in the new curriculum and occupies the majority of student time).

- Cohort B participants were less reluctant to include supplemental or non-required content than were Cohort A participants.

Cohort B: Site Modification and Usability Testing

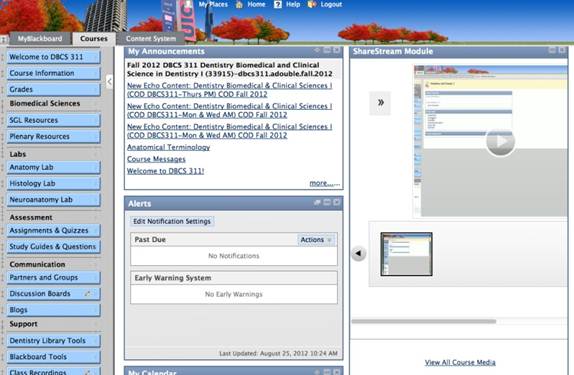

Modifications based on these results included name changes for several groups, consolidation of some content, and the incorporation of additional information on the course home page. The modified course site is shown in Figure 6.

Figure 6. The modified course site developed using results of the Cohort B semi-closed card sort (figure use and adaptation permission from Blackboard Inc.)

Specifically, the “Educational Resources” category, containing supplemental or non-required videos, PowerPoints, recordings, and animations, was changed to “Small Group Learning (SGL) Resources” and then further subdivided by case/scenario. “Daily Materials” was changed to “Plenary Sessions” and then further subdivided by date. These changes reflected the emphasis placed by Cohort B on grouping content by activity (plenary sessions, small group learning) rather than by discipline yet maintained the priority both cohorts placed on being able to access content for specific sessions with faculty based on date of access. The “Assignments” category was further subdivided by relevant case/scenario so that all assignments or quizzes related to one of the specific cases/scenarios with which the students were working in their small group learning activities.

Usability testing

The five Cohort B participants recruited for the scenario-based usability test of the modified site were able to successfully complete all of the assigned task scenarios. Cohort B participants were able to navigate through the modified template site and complete each task scenario without assistance from the faculty observer within 1 minute per task scenario.

Discussion and Conclusions

The results of the Cohort A open card sort were somewhat surprising to the curriculum planning committee. Anecdotal evidence suggested that both students and faculty members preferred to organize material by discipline, by topic, or by medium. In fact, prior to the first card sort, the planning committee suggested that sites should be organized by discipline within each didactic topic and then by medium because this seemed to make the most sense to faculty members, based on prior experience with Blackboard course sites. However, the Cohort A open card sort revealed that participants almost universally wanted material organized based on frequency of access.

While it may come as no surprise that students think primarily in terms of their weekly schedule, it was interesting that faculty (at least anecdotally) did not consistently share this perspective. In fact, the preconceived notions of the planning committee (that students would prefer organizing content by discipline and then by medium) were essentially debunked by the data obtained from student participants. This may simply reflect the differences in overall schedules between faculty and students, or it may reflect a difference in the mental models of novices (content users) and experts (content producers).

These unexpected results, as well as the verbal feedback (complaints) from Cohort B students during their first year in the new curriculum, prompted the second card sort. The planning committee became increasingly concerned that, even though students provided the data from the initial open card sort, their lack of experience with an integrated curriculum might have left Cohort A participants unable to reliably predict a logical information architecture for the new curriculum. Although Cohort B students were able to use the initial course sites throughout their first year in the new curriculum, the second card sort allowed the curriculum planning committee to make modifications to the template site before several years of sites were built.

It is possible that differences in organization identified between the two cohorts reflect the differences in curricula experienced by these two participant pools (traditional vs. integrated). For example, the Cohort B participants, exposed to an integrated curriculum with a focus on case/scenario-based learning, were much more accepting of supplemental resources and tended to integrate materials according to case/scenario rather than by discipline. This last point is conjecture at present; a larger study with an experimental design would be necessary to determine whether exposure to different curricula impacts the process of organizing content. A larger, experimental study would also be required to determine whether the actions of consuming or producing content (differences between students and faculty, for example) impact content organization. Although this case study cannot address these broader questions, the results presented here do reveal that experience with the new, integrated curriculum encouraged Cohort B students to request additional modifications to site organization. As the Cohort B participants more accurately reflect actual site users, the Cohort B semi-closed card sort results provided valuable insight into a logical navigation for this population. The Cohort A open card sort and usability test provided an excellent starting point and enabled us to put together an initial template site that could lend consistency across all the first-year biomedical sciences course sites. The Cohort B semi-closed card sort and usability test allowed us to refine that template and make numerous modifications. This second step was essential to ensure that the course sites reflect the integrated structure of the new curriculum as much as possible and to provide an information architecture that is consistent with the expectations of the primary site users.

While the most recent cohort of students in the program, the cohort following Cohort B, appears to be experiencing minimal difficulties interacting with the modified course sites, it is important that we continue to monitor the performance of the sites for incoming students.

Recommendations

As mentioned above, further research is needed to assess the impact that an integrated system has on student perceptions of optimal site organization and how students construct relationships among academic resources. While faculty members encourage students to approach conceptual material in innovative and integrated ways, is it possible that students may think about organizing content and concepts differently as a result? If so, how do we best accommodate this? While it is possible that changing curricula may lead to changing mental models regarding how specific pieces of content relate to each other, larger-scale, quantitative studies are necessary to explore this hypothesis in any significant way.

This study specifically strove to address the challenges of course site use for students, the primary site users; however, the curriculum planning committee also identified challenges related to faculty use of course sites. Future studies will need to address faculty preferences and expectations for site organization.

References

- Anderson, S. W., Damasio, H., Jones, R. D., & Tranel, D. (1991). Wisconsin card sorting test performance as a measure of frontal lobe damage. Journal of Clinical and Experimental Neuropsychology, 13(6), 909-922. doi: 10.1080/01688639108405107

- Berg, E. A. (1948). A simple objective technique for measuring flexibility in thinking. Journal of General Psychology, 39(1), 15-22. doi: 10.1080/00221309.1948.9918159

- Bergström, J. A. (1893). Experiments upon physiological memory. American Journal of Psychology, 5, 356-359.

- Block, J. (1961). The Q-sort method in personality assessment and psychiatric research (pp. 3-26). Springfield, IL: Charles C. Thomas.

- Brown, S. R. (1996). Q methodology and qualitative research. Qualitative Health Research, 6(4), 561-567. doi: 10.1177/104973239600600408

- Bunch, M., & Rogers, M. (1936). The relationship between transfer and the length of the interval separating the mastery of the two problems. Journal of Comparative Psychology, 21(1), 37-52.

- Hudson, W. (2012). Card sorting. In M. Soegaard & R. F. Dam (Eds.), Encyclopedia of human-computer interaction (chapter 22). Aarhus, Denmark: The Interaction-Design.org Foundation. Retrieved August 25, 2012 from http://www.interaction-design.org/encyclopedia/card_sorting.html

- Jastrow, J. (1898). A sorting apparatus for the study of reaction-times. Psychological Review, 5(3), 279-285. doi: 10.1037/h0073343

- Lewis, K. M., & Hepburn, P. (2010). Open card sorting and factor analysis: A usability case study. The Electronic Library, 28(3), 401-416. doi: 10.1108/02640471011051981

- Nielsen, J., & Sano, D. (1995). SunWeb: User interface design for Sun Microsystem’s internal web. Computer Networks and ISDN Systems, 28(1-2), 179-188. http://dx.doi.org/10.1016/0169-7552(95)00109-7

- Paul, C. L. (2008). A modified delphi approach to a new card sorting methodology. Journal of Usability Studies, 4(1), 7-30. Retrieved from http://upassoc.org/upa_publications/jus/2008november/paul1.html

- Roth, R. E., Finch, B. G., Blanford, J. I., Klippel, A., Robinson, A. C., & MacEachren, A. M. (2011). Card sorting for cartographic research and practice. Cartography and Geographic Information Science, 38(2), 89-99.

- Spencer, D. (2009). Card sorting: Designing usable categories. Brooklyn, NY: Rosenfeld Media.

- Stephenson, W. (1953). The study of behavior: Q-technique and its methodology. Chicago: University of Chicago Press.